xAI’s Lawsuit Puts Colorado’s AI Law on a Collision Course With the First Amendment

This case asks whether AI developers are more like plumbers or editors

The most important new AI lawsuit in the country is asking some of the biggest questions of our time: is AI-generated content protected speech? Are the design choices behind it expressive? And how far can the government go in controlling what these systems say? How these questions are answered may determine the future of AI and who gets to define truth itself.

Yesterday, xAI sued Colorado Attorney General Phil Weiser in federal court, challenging SB 24-205, the state’s controversial AI law, which is scheduled to take effect on June 30, 2026. The law requires developers and deployers of certain “high risk” AI systems to use “reasonable care” to guard against “algorithmic discrimination,” while also imposing disclosure, documentation, and notification obligations. But that dry summary badly understates what is really at stake. As we argued in our National Review essay “Don’t Teach the Robots to Lie,” laws like this are dangerous not merely because they impose compliance burdens, but because they pressure AI developers to shape their models around the government’s preferred understanding of fairness, diversity, and disparate impact rather than around truth.

Indeed, while it is intended to combat discrimination, this law actually exempts certain forms of differential treatment intended to increase diversity or remedy past discrimination. In other words, the law penalizes certain disparities while permitting outputs that support the state’s goals. The result is pressure on AI developers to align with the state’s views, which amounts to viewpoint discrimination.

That is what makes xAI’s complaint so important, and it deserves real credit for saying plainly what too many people in this debate have preferred to blur. Colorado is pressuring developers to alter training, prompts, outputs, and disclosures so their systems reflect the state’s favored moral framework. This, for example, may prevent a user from learning about other partisan or politically incorrect perspectives, or testing media bias. That gets to the core of the problem we have been warning about from the start. Once the law begins nudging AI systems away from disfavored truths and toward regulatorily approved answers, government is no longer just trying to reduce risk — it is trying to decide, in advance, what kind of reality these systems are allowed to describe.

Thus commences a major First Amendment battle over whether the state may use “algorithmic discrimination” law as a backdoor tool for steering AI outputs. That is exactly the fight we should be having now, before a pile of state laws hardens into a regime under which our most powerful knowledge-producing systems are rewarded for giving safer, flatter, more fashionable accounts of the world instead of truer ones. Once enough states head down that road, AI will go from helping us see reality more clearly to helping public officials sand down reality until it fits the ideology of the moment.

This is about more than compliance

What makes this complaint more serious than the usual industry grumbling is that it starts from a strong and necessary premise: model design is itself expressive. Decisions about what data to train on, what to optimize for, how to fine-tune behavior, what guardrails to impose, and what outputs to permit are editorial judgments as much as engineering choices.

That is the key point. We already recognize in other contexts that selecting, ranking, filtering, and presenting information can be protected expression. Editorial discretion is one of the central commitments in First Amendment law, whether it’s editing a newspaper, curating a social media feed, or crafting AI outputs. Once you understand modern AI systems as engines for selecting and structuring language, Colorado’s position starts to look much less like ordinary regulation of commercial conduct and much more like an attempt to steer speech-producing systems toward the state’s preferred moral conclusions.

Colorado presents this law as a way to prevent harm in consequential decisions related to things like housing, hiring, insurance, and health care. Uses may range from a small business generating interview questions to a mortgage broker running fraud checks. But that is exactly how speech restrictions are so often sold: not as censorship, naturally, but as reasonable safeguards against harmful consequences. The problem is that once the state starts pressuring AI systems to avoid disfavored conclusions and produce approved ones instead, it is leaning on the machinery of thought itself.

That is part of what makes Colorado’s law so troubling. It excludes from the definition of discrimination efforts to expand applicant pools to “increase diversity or redress historical discrimination.” xAI argues that this is ideological selectivity dressed up as consumer protection. Put less delicately, Colorado is taking sides in a live moral and political dispute and then trying to hard-wire its preferred answers into systems the rest of us will increasingly rely on to understand the world.

The complaint also points to something else worth noticing. Colorado’s own political leadership has already acknowledged that this law needs “additional clarity” and “improvements,” and the state delayed the effective date while arguments over revision continued. While their concern was the law’s focus on discriminatory outputs (as opposed to intentional discrimination), the delay and controversy demonstrate that the law’s central problems are more than small drafting defects easily amended later. The problem is a law with a baked-in, ideologically loaded standard that could pressure developers into laundering awkward outputs into something less accurate but more fashionable.

The electronic oracles start to act like the electronic HR representatives. They learn to hedge where they should speak plainly, evade where they should answer directly, and flatten contested questions into whatever formulation is safest."

xAI also raises a basic point that should concern anyone who cares about free speech, innovation, or federalism: Colorado does not get to set AI policy for the whole country. This law purports to reach any AI system that affects a Colorado resident somewhere in the chain of impact. Even an AI model built outside the state, trained outside the state, and used nationwide would have to bend to Colorado’s rules if any Coloradan, anywhere, is impacted.

That would be bad enough as a matter of overreach. It’s worse because it invites a race among states to impose their ideological and regulatory preferences on national AI systems. xAI’s lawsuit is, in part, a fight over whether one state may pressure the rest of the country into accepting its preferred standards for how AI systems should talk, reason, and describe the world. (After all, it wouldn’t be the first time Colorado used a law to impact an ideological debate.) If anti-discrimination law becomes a general-purpose lever for controlling AI outputs, it isn’t hard to imagine a regulatory tug-of-war where states issue contradictory (or even mutually exclusive) directives, each trying to cement an ideology, and none of them quite being the same as the pursuit of truth.

Why “algorithmic discrimination” law worries us

Our concern is simple. The danger is that “algorithmic discrimination” becomes a high-minded label for pressuring AI systems to produce outputs that conform to the moral and political preferences of whoever happens to be writing the rules.

We have seen this dynamic before. In Unlearning Liberty and Freedom From Speech, Greg wrote about the way anti-discrimination and anti-harassment rationales, however sincerely advanced, were repeatedly expanded beyond punishing actual misconduct and turned into tools for policing expression itself. What began as supposedly narrow efforts to protect people from harm too often ended up punishing satire, dissent, heterodoxy, and ordinary disagreement. The result was less about clarity or justice and more about fear, conformity, and institutions less able to distinguish truth from orthodoxy.

Now the same logic is threatening to migrate into AI, only this time it would be aimed not merely at speakers on a campus or writers in a newsroom, but at systems rapidly becoming the intellectual infrastructure of modern life. They are, in an important sense, the new libraries. And if the great knowledge machines of our age are incentivized to say not what is true, but what will not get them sued, we are all in serious trouble.

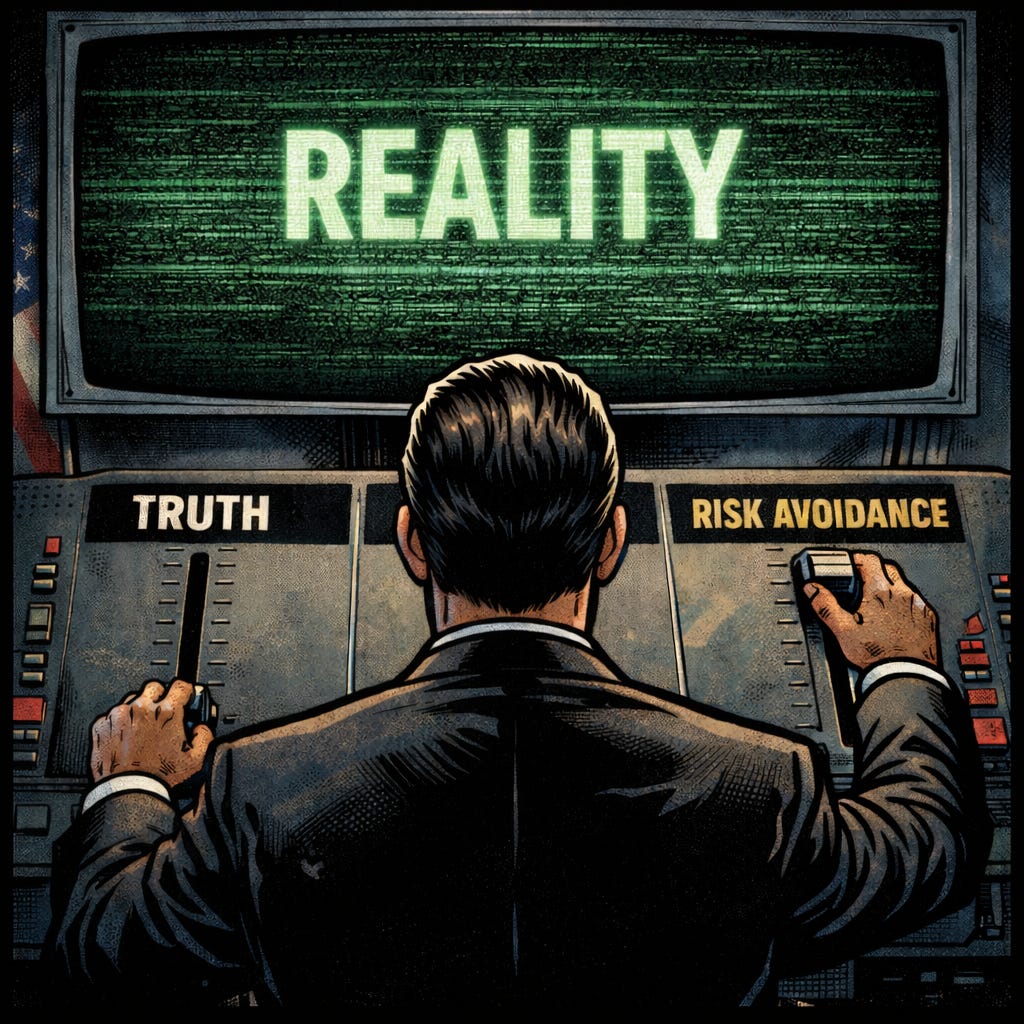

The electronic oracles start to act like the electronic HR representatives. They learn to hedge where they should speak plainly, evade where they should answer directly, and flatten contested questions into whatever formulation is safest and most regulatorily comfortable. We no longer get systems optimized for truth-seeking. We get systems optimized for risk avoidance. That’s a fundamental corruption of the project.

Free speech matters because it prevents any one faction from gaining too much control over the process by which society tests ideas and corrects error. In American politics, we divide power because we know human beings are biased, self-interested, and far too sure of their own virtue. In knowledge-producing institutions, we need the same principle. We need disagreement, criticism, rival interpretations, and the freedom to say uncomfortable things. We need, in other words, something like separation of powers around truth.

“Algorithmic discrimination” law threatens to do the opposite. It offers governments a way to pressure AI developers to treat contested moral, political, and empirical questions as though they had already been settled. It threatens to do to AI what speech codes did to campuses: use the language of protection to narrow the range of permissible thought.

That is why this case matters so much. Not because xAI is a perfect messenger, and not because every argument in the complaint is certain to prevail, but because the lawsuit forces a crucial question into the open: Are the design and tuning choices that shape an AI model’s outputs protected expressive judgments, or are they merely a form of conduct that officials may pressure into saying approved things in approved ways? Are developers exercising editorial discretion, like newspaper editors, or are they just building systems, like plumbers?

If the answer is that these judgments are protected expression, Colorado’s law has a serious constitutional problem. If the answer is no, then the rest of us do. Because it will become much easier for states to turn “algorithmic discrimination” into a general theory of AI control. And at that point, asking an AI model a question may no longer yield the best account of reality it can generate. It may yield only the version of reality that regulators are willing to tolerate.

At FIRE, this is one reason we are expanding our work on technology and free speech. The old fights over campus speech, censorship by bureaucracy, and the abuse of anti-discrimination rationales are migrating into the systems that increasingly mediate how people learn, argue, research, and think. If those systems are trained to fear liability more than they value truth, the damage will extend far beyond Silicon Valley to reach classrooms, workplaces, journalism, science, and democratic culture itself.

If you want to help us fight this, support FIRE. We are expanding our work on technology and free speech, and that will take money as well as good plaintiffs, strong sources, and reporters paying attention. If you are in a position to help on any of those fronts, we want to hear from you.

Shot for the Road

I’m very excited to be back in Vancouver at TED2026 next week. If you haven’t already seen it, please check out my TED talk from last year and take a peek behind the curtains to see how I prepared!

Colorado just can't seem to help itself when it comes to passing laws that violate the 1st amendment.

I'm a free speech absolutist, as there is no other viable option for maintaining a free Republic. Let each AI use whatever algorithms it creators choose. Eventually, they will all develop a reputation for honesty or lack there of, much like the NYT, WSJ,and WAPo which are not to be trusted.

Dick Minnis

removingthecataract.substack.com